DeepSeek-V4 Launched, Then Cut Prices Twice in a Row

The model is the headline. The pricing is the strategy.

DeepSeek released V4, then cut prices twice over the next two days.

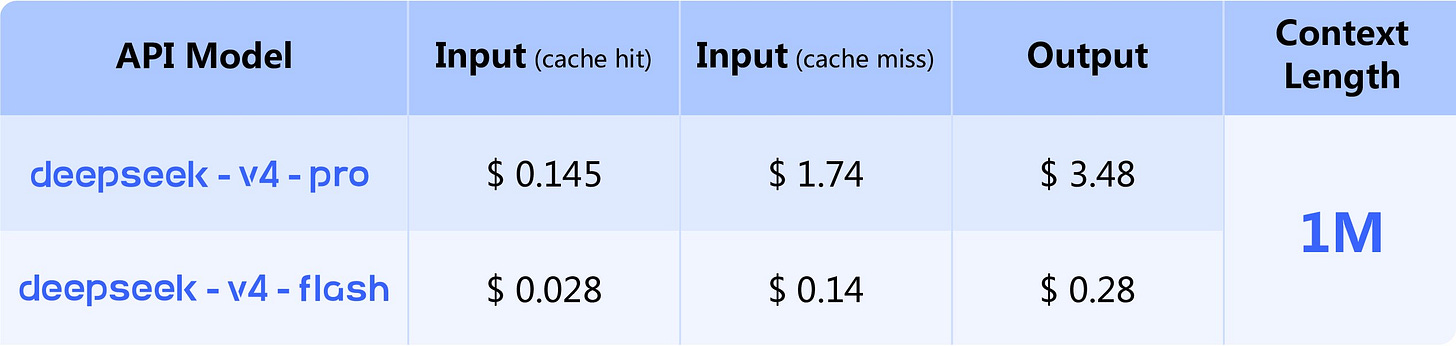

After the release, OpenClaw quickly added support for it. Looking at the pricing structure, Flash is extremely cheap, while Pro is relatively expensive. The gap between the two is around 12x.

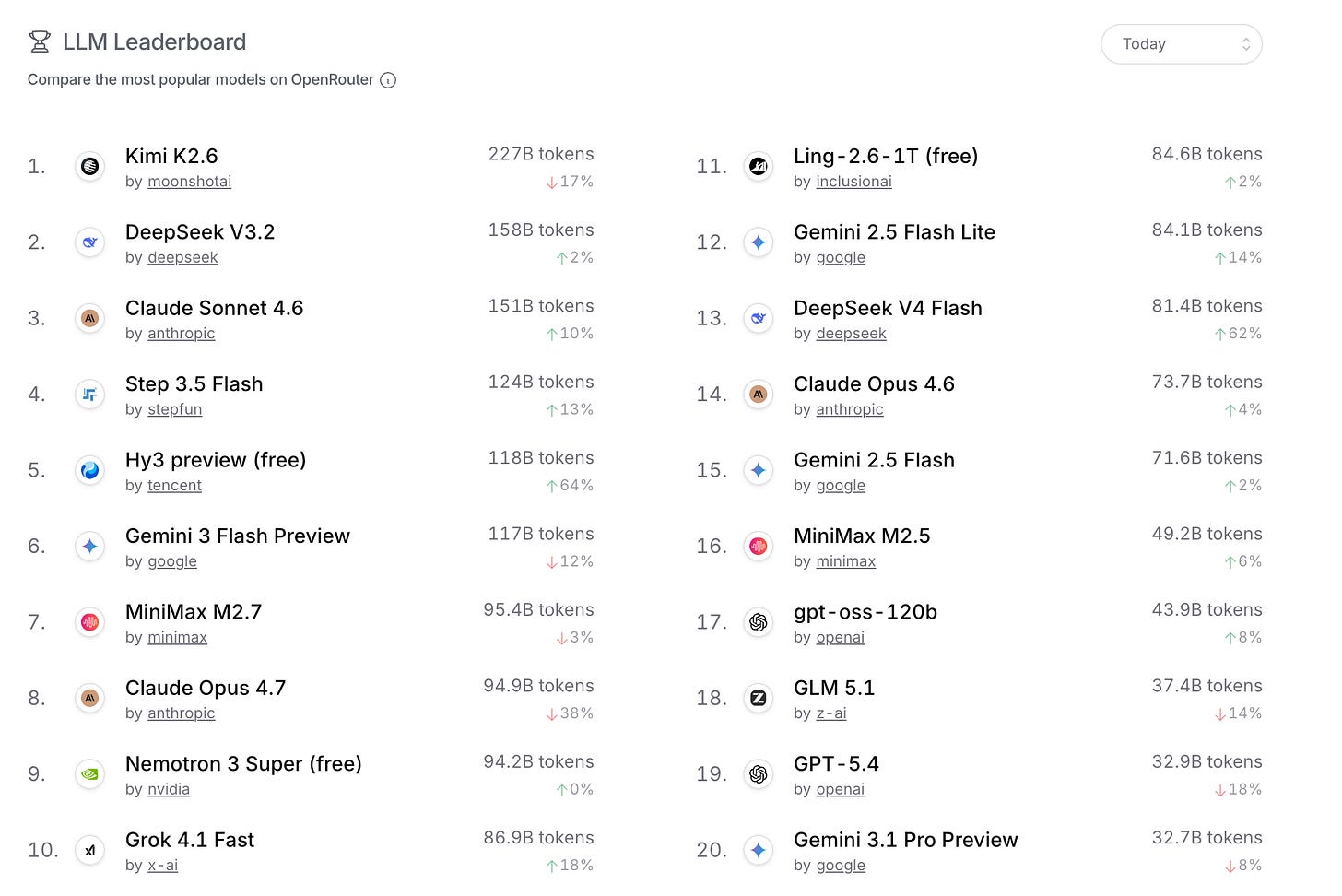

V4 also quickly appeared on OpenRouter’s usage rankings, which suggests that people were already trying it in real workflows.

Then, on the second day, DeepSeek launched a 75% discount on the V4-Pro API.

The DeepSeek-V4-Pro API is now available at a limited-time 75% discount, ending at 15:59 UTC on May 5, 2026.
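As a rough check on what the discount does to the Pro/Flash gap, here is a minimal sketch. It assumes the roughly 12x gap applies uniformly across token types and that Flash pricing stays unchanged; both are simplifications, and the function name is made up for illustration.

```python
def gap_after_discount(gap: float, discount: float) -> float:
    """Return the Pro-to-Flash price ratio after discounting Pro only.

    gap      -- Pro price divided by Flash price before the discount
    discount -- fraction taken off the Pro price (0.75 means 75% off)
    """
    if not 0.0 <= discount <= 1.0:
        raise ValueError("discount must be between 0 and 1")
    return gap * (1.0 - discount)


# Assumption: ~12x gap from the article, 75% limited-time discount on Pro.
print(gap_after_discount(12.0, 0.75))  # 3.0
```

Under these assumptions, Pro at a 75% discount costs about 3x Flash rather than 12x, which is a much easier premium to justify for coding workloads.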

My guess is that the initial pricing of Pro was indeed a bit too high. Many people doing coding or agentic coding naturally reach for Pro or Pro Max-like settings, and a single day of use could easily cost dozens of RMB. Compared with a fixed-price coding plan, that is simply too expensive.

DeepSeek may have noticed this quickly, so it introduced the limited-time discount.

This also puts OpenRouter in an awkward position. If OpenRouter cannot adjust prices in time, many users will simply go back to the official API.

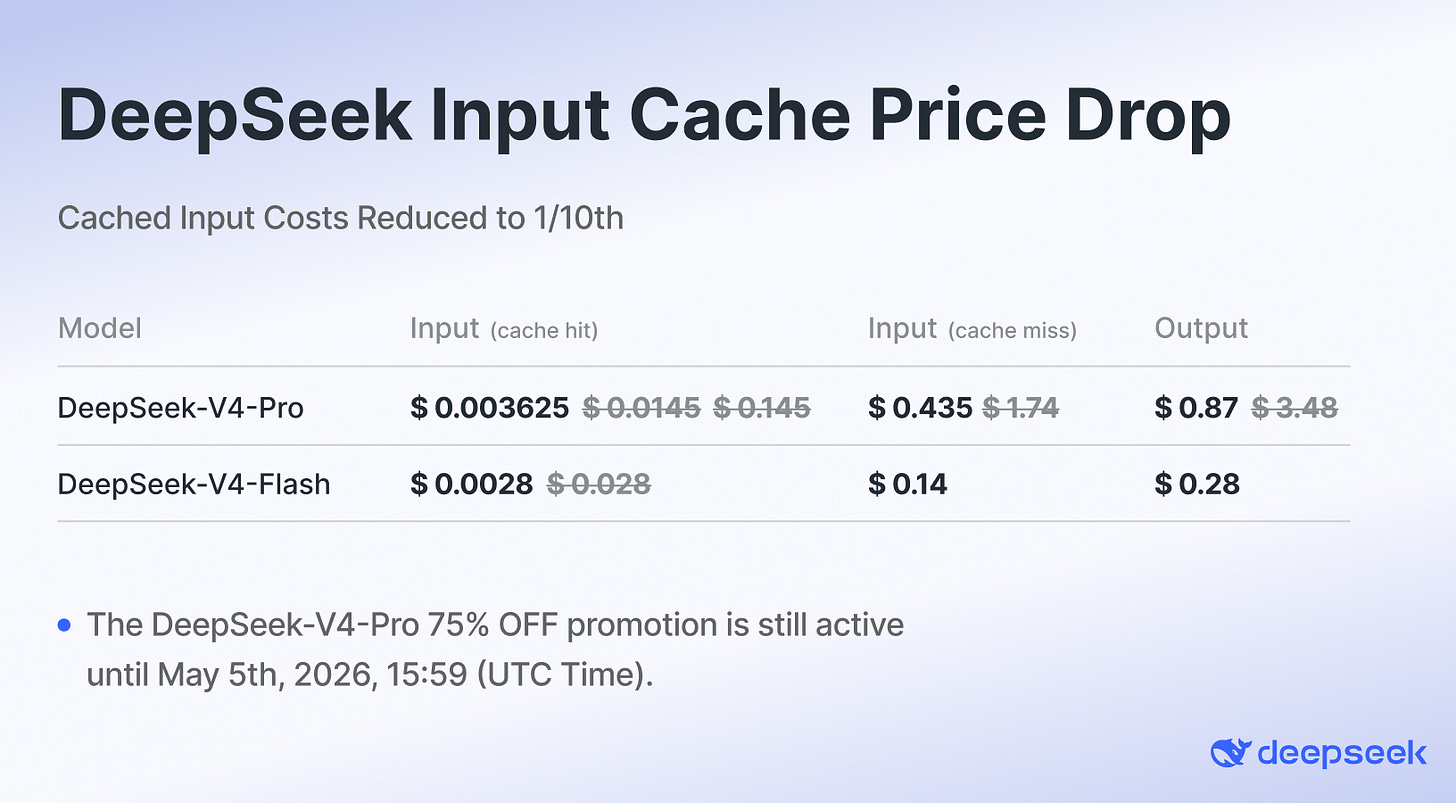

Then, on the third day, DeepSeek announced another price cut:

Starting immediately, the input cache hit price for all DeepSeek API products has been reduced to one-tenth of the original price. Build applications more efficiently at a lower cost.

This cache price cut is the real killer move.
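To see why the cache price matters so much, here is a minimal sketch of blended input cost as a function of the cache hit ratio. All prices below are hypothetical placeholders, not DeepSeek's actual rates, and the function and constant names are invented for illustration.

```python
def input_cost(tokens: int, hit_ratio: float,
               miss_price: float, hit_price: float) -> float:
    """Cost of `tokens` input tokens, with prices given per million tokens.

    hit_ratio is the fraction of input tokens served from the cache.
    """
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    hit_tokens = tokens * hit_ratio
    miss_tokens = tokens - hit_tokens
    return (hit_tokens * hit_price + miss_tokens * miss_price) / 1_000_000


# Hypothetical placeholder prices per million input tokens (NOT real rates).
MISS_PRICE = 1.00
OLD_HIT_PRICE = 0.10
NEW_HIT_PRICE = OLD_HIT_PRICE / 10  # the "one-tenth" cut from the announcement

for ratio in (0.0, 0.5, 0.9):
    old = input_cost(10_000_000, ratio, MISS_PRICE, OLD_HIT_PRICE)
    new = input_cost(10_000_000, ratio, MISS_PRICE, NEW_HIT_PRICE)
    print(f"hit ratio {ratio:.0%}: old {old:.2f}, new {new:.2f}")
```

The saving grows with the hit ratio. Agentic coding sessions tend to resend a long shared prefix on every turn, so they are likely to sit at the high end of that range, which is where a one-tenth cache price changes the bill the most.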

Once the cache price drops to one-tenth of its original level, few vendors can realistically follow. DeepSeek is clearly moving to grab market share. My feeling is that this may actually be close to the normal price level once Huawei’s inference servers begin shipping at scale.

It almost feels intentional.

First, set the price high.

Then cut it twice.

Get trending twice.

And finally land at a price point that looks extremely competitive.

The result is that DeepSeek-V4 suddenly looks like one of the best price-performance options on the market.

This is not just a model release.

It is a pricing campaign, a developer acquisition campaign, and probably the beginning of a new round of inference cost competition.